If you’ve been on social media – namely Twitter – you’ve dealt with it. You add a comment to a thread, and some random account you’ve never seen before replies with “you’re a racist” or “shut up you poopyhead.” It throws you off balance, you’re not sure how to respond, you often wind up responding in kind, and it generally ruins your day.

The real question is, do you even respond to these people? Or “NAAH”?

In other words, how do you know there’s an actual person behind the account? It’s no secret Twitter has hordes of bot accounts: people set up multiple accounts and run them by a fixed script to push and amplify a single message. It’s a particularly noxious form of astroturfing that needs to be curtailed.

Fortunately, we can apply what we’ve learned from e-mail spam filtering to the Twitterverse. I’ve developed what I call the “NAAH” algorithm. NAAH stands for the four qualities that lead me to believe I’m dealing with a bot: New, Anonymous, Automated, Hostile.

In the spam filtering industry, we tend to quantify these things into a Spam Confidence Index (SCI) and then users can decide at what score to filter any incoming message as spam. I’ve simplified my algorithm to just use yellow and red flags.

Red flag: an indication that it’s probably a bot in violation of Twitter’s Terms of Service (ToS). You should block and report.

Yellow flag: probably a jerk you should block, but doesn’t rise to a ToS violation. Don’t report.

One flag: an indication, but not necessarily a problem.

Two flags of the same color: you’re probably justified in acting on it.

Three or more flags of the same color: definite red alert.
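The flag-counting rules above can be sketched as a small Python function. This is only a minimal sketch: the `Flag` enum, the `verdict` function, the strings it returns, and the choice to check red flags before yellow ones are all assumptions made for illustration. The rules themselves don't say how to combine the two colors.

```python
from collections import Counter
from enum import Enum


class Flag(Enum):
    YELLOW = "yellow"
    RED = "red"


def verdict(flags):
    """Map a list of Flag values to a suggested action.

    Assumption: red flags are evaluated first, because a red flag
    carries the stronger action (block and report).
    """
    counts = Counter(flags)
    red, yellow = counts[Flag.RED], counts[Flag.YELLOW]
    if red >= 3:
        return "definite: block and report"
    if red == 2:
        return "probable: block and report"
    if yellow >= 3:
        return "definite: block"
    if yellow == 2:
        return "probable: block"
    if red or yellow:
        return "indication only"
    return "no flags"
```

For example, `verdict([Flag.RED, Flag.RED])` suggests blocking and reporting, while a single yellow flag only counts as an indication.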

- New: bot accounts are frequently blocked and have to create new accounts to keep up their activities.

    - Total number of followers they have, plus the number they are following (you can weigh followers more than following):

        - Under 30: red flag

        - Under 200: yellow flag

    - Joined date:

        - Less than a month: red flag

        - Less than six months: yellow flag

- Anonymous: for obvious reasons. A legitimate human who wants to be heard on Twitter will say something about who they are. A bot won’t. Qualifier: some accounts are legitimately anonymous. Those will usually give you some indication of who they are if asked. See Hostile.

    - No human face, or obviously wrong: red flag

    - Very old pic: yellow flag

    - Description: doesn’t give some indication as to who the person is, untraceable: yellow flag

    - Anime pic: BLACK FLAG FOR THE LOVE OF GOD BLOCK AND REPORT

- Automated: bot accounts are not 100% computer generated. They’re generally created by human beings who follow a routine that’s easy to automate: create an account, follow a ton of people that are either high profile or have a high followback rate, attack people who contradict your narrative.

    - Account name has a string of numbers: red flag. Someone’s doing the minimum work to create an account.

    - Number they are following, divided by the number of followers they have:

        - 10 or more: red flag. This is a sign they’re following as many as possible with the hopes of a follow back

        - 2 or more: yellow flag

    - Check their timeline:

        - All tweets are forwarding viral content: red flag

        - All replies are hostile replies to others: red flag

    - Profile description:

        - Obvious political agenda like #resist or #impeach45: yellow flag. On the flip side, if you’re on the left and are getting blasted by MAGA people (I don’t have this problem), you can expand this to #MAGA and other hashtags.

        - No/minimal description: red flag

- Hostile: human beings have a sense of reason and attempt to relate to each other. Bots do not. Think of the army of the undead or a rabid dog. They have one singular purpose – attack the living.

- If they do not respond when you reply: red flag. This means they are automated and are off to insult the next guy on the internet.

- Respond off the bat with a hostile comment: red flag

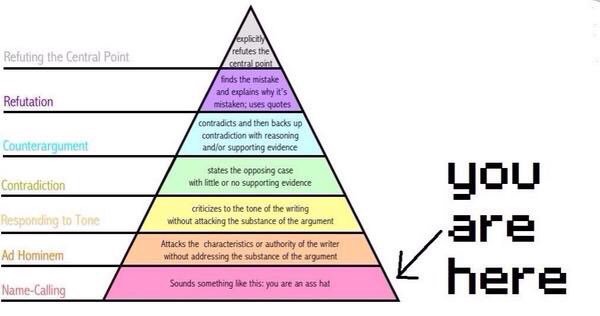

- Responds with an argument – categorize into the following argument pyramid meme:

The argument pyramid – an evergreen meme. Name-calling/Ad hominem: red flag. Any bot can call you a poopyhead and move on.

Responding to Tone/Contradiction: yellow flag. A good faith human will explain to you why they think you’re wrong. They won’t just tell you you’re wrong and expect you to do the research for them.
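The numeric checks under New and Automated can be sketched in Python. This is a rough illustration under stated assumptions, not a finished filter: the function names `new_flags` and `automated_flags` are made up, "a month" is taken as 30 days and "six months" as 182 days, a "string of numbers" is taken to mean four or more digits in a row, and the follower/following sum is left unweighted. The ratio is computed as following divided by followers, the reading that matches the stated rationale of mass-following in the hope of a follow back; that reading is also an assumption.

```python
import re
from datetime import date


def new_flags(followers, following, joined, today):
    """Flags for the "New" checks: small network and recent join date."""
    flags = []
    network = followers + following  # unweighted sum (weighting left out)
    if network < 30:
        flags.append("red")
    elif network < 200:
        flags.append("yellow")
    age_days = (today - joined).days
    if age_days < 30:  # assumption: "a month" is 30 days
        flags.append("red")
    elif age_days < 182:  # assumption: "six months" is 182 days
        flags.append("yellow")
    return flags


def automated_flags(handle, followers, following):
    """Flags for the numeric "Automated" checks: handle digits, follow ratio."""
    flags = []
    if re.search(r"\d{4,}", handle):  # assumption: 4+ consecutive digits
        flags.append("red")
    # Compare following to followers by multiplying instead of dividing,
    # so an account with zero followers does not raise a division error.
    if following > 0 and following >= 10 * followers:
        flags.append("red")
    elif following > 0 and following >= 2 * followers:
        flags.append("yellow")
    return flags
```

As a usage example, `new_flags(10, 15, date(2024, 1, 1), date(2024, 1, 10))` returns two red flags (tiny network, joined nine days earlier), and `automated_flags("jane_doe", 100, 250)` returns a single yellow flag for the 2.5 ratio. The judgment calls (profile picture, timeline, tone of replies) still need a human eye.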

“But I’m for real!”

Keep in mind this is an algorithm, and every algorithm can produce false positives. This doesn’t mean the algorithm is bad. We need the algorithm because the number of cases we deal with is overwhelming for us as human beings, and we need some automated guidance to deal with them.

That being said, if you’re a human being, new to Twitter, and you’re getting blocked by this algorithm, take it as a moral lesson: you’re being a jerk and people don’t want to talk to you. If you want to build up your “prestige” on Twitter and be taken seriously, there are some simple rules to follow:

- Don’t go following a ton of people immediately. Show some discretion on who shows up in your feed. Following people to get followers is fine, although a bit gauche.

- Don’t go insulting people, especially verified accounts who’ve been around a while.

- If you think someone is wrong, explain why. Do some research on why you’re right. Don’t expect others to do the lifting for you.

- Say nice things. Acknowledge when others are right. Think of the Buddhist maxim for mindful speech. “Is It True? Is It Necessary? Is It Kind?”

Through the long-term, overall application of such principles, Twitter has evolved into a natural feudal “prestige” system. Accounts that have been around a while and have earned a trustworthy name get followers who trust them, and bots who try to detract. A good way to build up your reputation is to help defend the positions of such accounts, as they’re overwhelmed with hostile bot replies.

Of course, these things take time and effort. Which is why no bot will go through the trouble.

Conclusions

Twitter claims that it filters out bad actors and fake accounts in the name of raising the overall health of the conversation. While there is something to be said for that, it’s no secret they allow hordes of leftist bots to run their website. It may be the high profile accounts they ban which get them the most scrutiny, but these harassing bots are where the real editorializing takes place.

As they come under increasing scrutiny, it’s certainly possible they could be investigated for this. But investigating this behavior would require a subpoena of their algorithms, and a deep dive by expert witnesses into exactly what goes on behind the curtain. Needless to say, this would be a very late stage in the game, and will be fought by Twitter management tooth and nail.

So until then, enjoy this system that an honest platform would already employ. Tweak it to your liking, and see how much it improves your experience on Twitter. I promise it will improve it significantly.